Scope

This document should serve anyone with curiosity related to the following, as we’ll cover:

- Basic protein knowledge (no promises), meaning the field of “proteins” as a whole (with) elaboration in many topics (branches) that are of potential relation with the main topic in case you’ll be delving further in that field,

- The Protein Problem Statement(s), along with their plausible routes,

- Core concepts of the architecture of “Alphafold 1” by DeepMind. Codebase and illustration into fitting the data to a (custom) model in separate modules, also called Machine Learning,

- And lastly what could be used to extend the idea itself of AlphaFold 1, as well as questions that have incurred to me personally while attempting to understand the architecture motivation and the interconnected nature of everything. These are thrown throughout the document as “insights” and in the (relatively) simple methodology of demonstration itself. However, it is favorable for the reader to absorb it however they like.

At the Engineering section, everything is wrapped with a “before in, and out of each component pass forward” in the favor of better comprehension of the map. There is also a Building up from the smallest to the biggest protein component that serves a bigger picture on what we’ll be dealing with for later stages, as well as solidify our understanding towards each components role.

Extension

While there will be a thorough study towards AlphaFold 2 and other architectures, some of the notes will be left as possibilities for extension for oneself to exercise the muscle of “How can we improve this?” and “What is this prototype doing inefficiently?”, prompting the reader to delve into the material and realize they’re potentially passing an important, guaranteed questions that are answered in later architectures (something that is built upon; AF2 and AF3 for example and others). If you have expertise in anything related here, don’t forget to leverage it!

A prototype architecture would contain several distinct modules, similar to the idea of “making it happen and perfect it later”

The reason (we) chose AlphaFold 1 (with extension potential mentioned in Foreword) and not its modern variants (AlphaFold 2’s breakthrough) is due to the foundational approach towards the problem at hand. The extensions are the iterative enhancement of the inital architecture (e.x. by introducing granularity), hence becoming the foundation for many others.

All intentions here lie on gaining intuition that will matter later on either on the same exact task and the elaborative extensions, or the in different disciplines (the science of adaptability). Hopefully that will serve as your “purpose” in reading the document at hand.

Considerations:

- This may be published before polished, maybe even before folding completely; meaning that some text are also thoughts immediately jotted down, which will be separated from practicality with each revision.

- Some sources are also linked partially or fully, even though they’re not the entire source in many of the text linking it.

- The document may be separated due to how large it is currently, but there will be attempts in making them fit to keep it holistic and central. Implementations will follow the Pytorch version of the repository here whilst adding paper evaluation and analysis if available. This codebase is for inference purposes only. No training happens anywhere.

- Lately, as of updating this in March 31st, the original paper published in nature was available through a public access token provided by the CASP13 repository authors. Now, that token only provides a preview of the paper, giving the first page of the publishing. It’s saddening how older research papers are locked behind paywalls, or even worse, how it was presumably available then later locked.

Any feedback of any form is welcome.

Brevity

To avoid repeating “AlphaFold X by DeepMind”; AFX is the norm,

AminoAcid abbreviation of “AA” which is used synonymously with “residue” or “res”,

along with the abbreviation of Angstroms “Å”: “Ang.”

Reinforcement Learning: “RL”

ProtienDataBank: “PDB”

….

Other abbreviations are made on-spot.

There are also cases for alternating between them for clarity and intuition.

Foreword

There are many architectures that have been placed in the record for the “protein folding” problem, going with “AlphaFold” by DeepMind:

- AlphaFold 1, which is our main topic;

‘Alphafold uses advanced biotechnology and AI to help determine longer protein structures over a shorter period’. This is the simplest algorithm (least devouring of data as well as the complexity threshold), but is the most non end-to-end “solution” (several components and “externalities”), which also lays groundwork and first-hand intuition on the problem statement. - AlphaFold 2 and its extensions: 2.3, and multimer which emphasizes a minor role in protein-protein interactions (some improved IDRs capturing capability), but it did well in the static protein-protein complexes (see AlphaFold | EMBL-EBI Training as well as for later purposes, this link here).

- AlphaFold 3, which is abit out of the current scope: Prediction of interactions not just of proteins, but also DNA, RNA, small molecules (ligands), ions and chemical modifications.

Purpose

Understanding why proteins fold is important towards understanding the problem statement, along with the motive of this entire interaction between the many fields, mentioning some:

- Biochemistry in the interactions between the side chains and many others,

- Bioinformatics and genetics relating to MSAs,

- Artificial intelligence; convolutions, attention, Evo-former, diffusion, etc,

- Structural Biology as in the decades-old dataset of PDB and experimental methods.

- Medicine and Biotechnology, which are currently the primary beneficiaries of a viable solution (which is greatly variant too). Think of it as the biggest “To-dos” after a breakthrough, using the insights derived from the other fields to:

- Accelerate drug discovery. Drugs typically work by binding to specific protein targets in the body (a receptor on a cell, or an enzyme in a pathogen). So, if we know the precise 3D structure of a target protein, we can design small-molecule drugs that fit perfectly into its active site. See thisand that!. Related to Cancer

- Understand and treat diseases along with predicting disease-causing mutations, as misfolded proteins are associated with a range of severe conditions (particularly neurodegenerative like Alzheimer’s, Parkinson’s and Huntington’s) as well as some cancers. So by understanding how they misfold, we can design therapies to stabilize them or maybe enhance the cells natural quality control systems, or maybe even prevent the formation of the toxic aggregates that starts them all. Along with others mentioned in the Disease prediction section.

- Synthesize more proteins. Maybe designing industrial enzymes that can break down plastic waste, creating more resilient crops, and many other. This is probably the most understated yet most potent goal.

Summary:

In AF1, we deal with a single protein, predicting the fold. Then, as the extension(s) occur, we deal with complexes in AF2’s multimer (along with single proteins in AF2 ver. 2.2). Lastly, we expand our capabilities of the two mentioned structures, all to view the wide range of biomolecules and predict their structures also. See more below on AF2 and AF3 advancement over AF1. Any relatively interesting detail will be added along the way.

The Problem Statement

The “protein folding problem” is actually three parts:

- The folding code (Thermodynamic questioning). Basically all the explicit physical interaction rules that determine which folded state is “stable” for a given sequence.

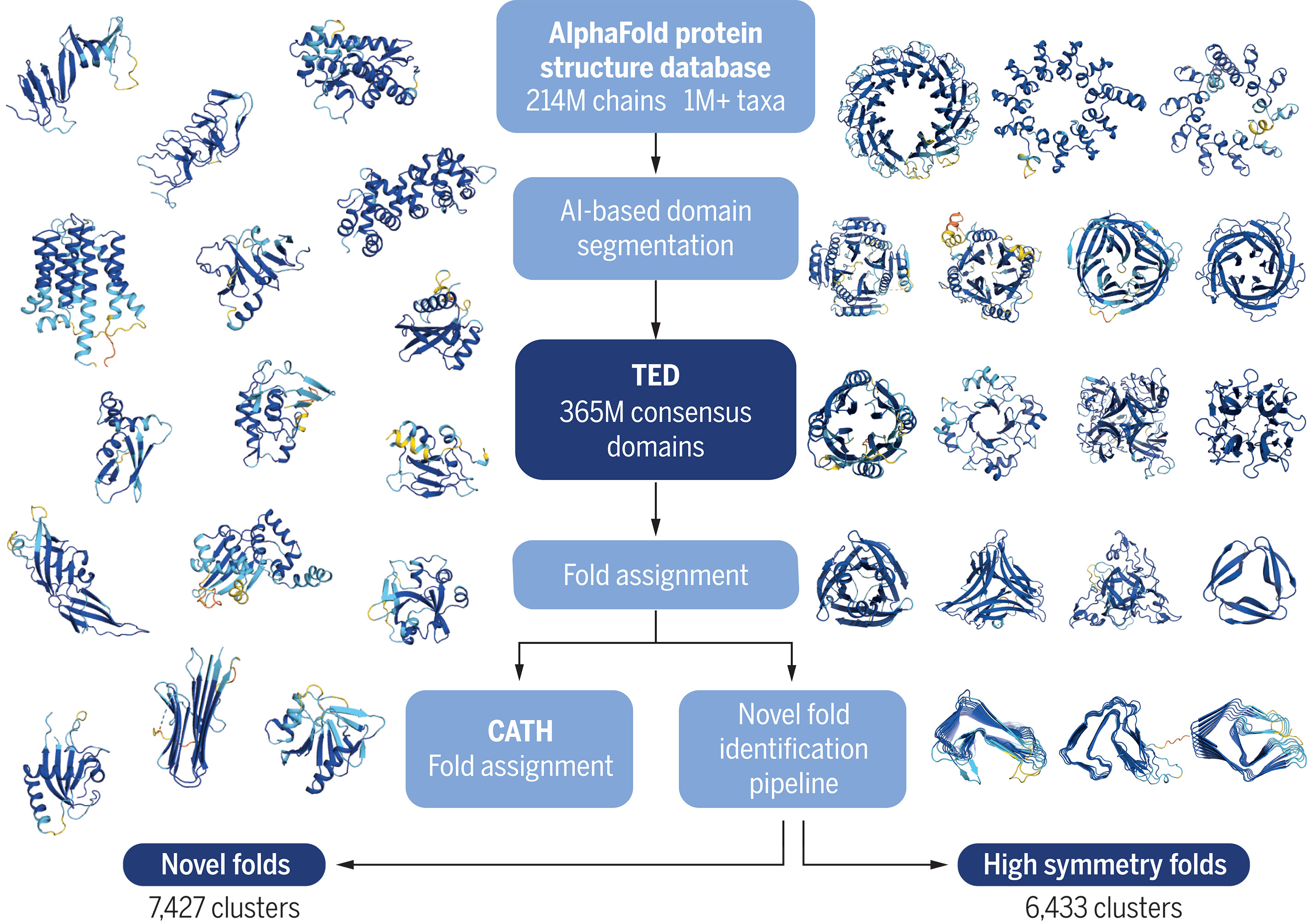

- Protein structure prediction. The static, single structures has been already determined of millions of proteins and are stored in the AlphaFold Protein Structure Database ‘AFDB’. This provided the blueprint by having a static map of nearly all known proteins.

- Understanding the physical process and mechanism by which a protein reaches its folded state in real time (which is largely open kinetics); including the intermediate states, pathways, chaperone assistance and misfolding. Meaning that we have a goal towards understanding “why” proteins fold the way they do. This question is abit out of scope, but we do address the misfolding and many other disease-causing mutations in a later section (Disease prediction)

With the evolutions of AlphaFold 1, the core stayed constant; you get one frozen pose with trust scores.

AlphaFold generally goes with (2) without having explicit inputs of the folding code (1) nor attempt to explain the models outputs (3). There are, however, some current case(s) of utilizing the explicit inputs of the folding code (used here in AlphaFold 1) indicated at Outputs, as well as later in AlphaFold 3s input.

Regarding (3), which is slightly technical; one line possibly be examined are in the “weights” themselves regarding a machine learning interpretation. Athough it is currently a black-box when relating it to AlphaFolds complex architecture that weights aren’t as easy to interpret, that is, if we attempt to understand the algorithms output (probably a first-approach is finding a heat-map of activations just like how we did in CNNs and attention as indicated in the image below, but it is more complex the deeper the architecture is).

Source; Linear combination of the weights and feature maps to obtain the class activation map. It is also possible to build an XAI (Interpretable AI that its internal logic is understandable) to explain the currently epistemically opaque weights of the complex architectures. Either ways, it is definitely out of scope and may be included in future extensions.

In other words;

“AlphaFold” in general predicts the final structure, but it does not fully explain the dynamic process of how it gets there in a biological system, nor does it address the other protein formalisms highlighted at Formalisms and evaluation.

Cancer

The general things that go wrong, across all cancers:

1} Growth signals get stuck ON (oncogenes, like "KRAS", "EGFR")

2} Brakes get broken (tumor suppressors, like "TP53", "BRCA", "RB")

3} DNA repair fails, so mutations accumulate faster

4} The cell hides from immune surveillance

5} The cell recruits blood vessels to feed itself (called angiogenesis)

6} It spreads (called metastasis, which is the deadliest stage of cancer)

Every Cancer hits some combination of these. Since Cancers are usually your own cells, and the immune system is trained not to attack your own kind (preventing other disease called autoimmune, which is still present regardless), therefore, some clever workaround targets the “neoantigens; which are mutation-generated proteins that are foregin enough (by also enough training) to not be categorized of your own kind, hence being a drug-worthy candidate.

For decades, proteins like KRAS or MYC couldn’t be targeted because we had no structure. No structure meant no rational drug design (setting aside drug-resistant cancers). AlphaFold filled in thousands of these (see also AlphaFold Protein Structure Database ‘AFDB’). AlphaFold 3 extends this to predict interactions between proteins and other molecules including DNA, RNA, and small molecule ligands, which means you can now at least see a binding pocket that was previously invisible.

Stem cells potential (Sounds interesting for later!)

Small note

Experimental techniques in capturing the protein itself; as in the use of “crystallography” (the main experimental technique) and other techniques. Crystallography is an accurate way of figuring out the structure of proteins, but what about the other techniques? In the bigger picture, this is just a “branch” of many thinking streams.

In the next formalisms section, there is a “realization” of using some of these experimental techniques in solving the current frontiers of problems revolving around the protein.

Another branch of thinking lies on the potential of a Machine Learning application towards these traditional techniques. So a Machine Learning algorithm to be used as an “Aid’er” or more professionally, a “Helper algorithm” in these techniques themselves. So besides a ML framework taking the core idea and digest (AF1’s case, also many feature engineering), we’d have it as a secondary component that aids in the function of the core technique in question (or experiment if we’re specific).

It also takes some form of delusion, too (mind you), as this is useful as a possibility opener if one is truly passionate about proteins and can open DOORS one couldn’t have IMAGINED!! (yes, yes, bla bla) as long as it doesn’t distract one from pursuing a single technique to their subjective creative limits, or however your working philosophy may apply.

Formalisms and evaluation

Some highlights in bold are of relative significance.

So, we conclude that:

Proteins are dynamic, not static: they’re constantly moving, twisting, changing shape in a biological environment. So when they perform their functions, say, binding with other molecules for example, any “Alphafold” predicts one molecular photo, similar to a static shot of a protein folded. But since proteins are dynamic and aren’t yet captured by any “AlphaFold” model in particular, mentioning some more of the dynamics:

- Transition states: The specific intermediate shapes it temporarily adopts moving between stable states (example being its interpolation between “open” and “closed” form).

- IDRs (Intrinsically Disordered Regions): Some parts of proteins are naturally and inherently unstructured and flexible till they bind with their partners. This is actually crucial to interact with a wide range of client proteins. Not to be confused with IDPs which are Intrinsically Disordered Proteins. More about it below.

- Real-time atomic fluctuations: Constant and rapid vibrations of chemical bonds and residues.

Some experimental techniques in finding the structure of the protein itself are NMR, Spectroscopy and FRET, along with molecular dynamics simulations that do DESCRIBE the conformational changes and the continuous flexibility and movement of the proteins in their natural environments (data we can use later). All of which would be key in figuring out not just one possible conformation, but all of their potential conformations per protein (one protein or a single “polymer”) or complex (multiple proteins… the computation outlook seems large). These experimental techniques methodology revolves around stacking multiple protein structures (ensemble) and transitioning between them from rapid atomic vibrations to slower domain rearrangements…

… Which is somewhat related to Markov’s formalisms of “Partially Observable Markov Decision Processes” in Reinforcement learning, where we also (generally) stack frames when this problem arise (again, for later! And this is not the only method!)

Back at the experimental methods, there is a foreseeable future of datasets containing these just like PDB and such. Well, there is apparently a method in combining multiple datasets, as well as developing NMR-only datasets like the one mentioned here. The only thing we can do is wait for more NMR dataset(s) instances… unless we find out a faster technique or use what we have now (including the NMR or not).

It is also worth noting that the protein folding process isn’t a one-time event.. It is a dynamic and often reversible process. Some proteins need the help of other proteins to fold (chaperones), some unfold (denure) physiologically (like the natural process of protein refolding in muscles to allow muscles to stretch and recoil), others unfold to certain conditions. Amazing!!

As for cancers: A huge fraction of cancer-relevant proteins (p53’s transactivation domain, MYC, many transcription factors) are Intrinsically Disordered (IDPs), meaning that they don’t have a stable structure. They fold only when binding a partner, or they exist as dynamic ensembles. AlphaFold gives you one conformation, which for these proteins can be actively misleading. NMR and MD simulations are still the honest tools here.

The functional and interactional formalism: which would describe the proteins activity within the broader cellular context, interacting with other molecules and their surroundings to preform specific biological functions (Protein-Protein interactions, Ligands, DNA: Which all seem to be partially or fully the target of AlphaFold multimer and Alphafold 3. They’re still not following the first formalism, though). It would involve:

- Network dynamics: One a proteins action influences the behavior of an entire cellular pathway or network of interacting molecules.

- Kinetic properties: The rate and speed of biological reactions and interactions (enzymes kinetics, etc)

- Allosteric regulation: The binding at one side on a protein affects the function at a different, distant site by changing the proteins shape along with its dynamics.

- Environmental effects like solution conditions (pH, temp, salt conc) and presence of other molecules (ligands, other proteins) on the overall behavior of the protein.

There are also point mutations, which implies a single AminoAcid mutation that caused a structural defect. Similar to a pawn being promoted to a queen in chess that wrecks havoc on the advantage bar, which is maybe related to Reinforcement learning, too. This is also similar to the Network dynamics and allosteric regulation. Point mutations are the trigger of an ensemble of cascades of events. These are the structural basis for diseases, more on that at the Disease prediction section.

It is advised to re-read this section after we’re done with the biology and the next implemention section.

A Small Biology Lesson:

For the motive, the knowledge of biology or in any other discipline is required when attempting cross-disciplinary attempted solution implementation of a foundational problem in that discipline (the 2nd protein folding problem).

Proteins are made from instructions made by the DNA, and the RNA (the messenger of DNA). It, the protein, then folds to a 3D structure to do a specific function in the body. The structure itself is destined by a mostly deterministic process (i.e. native conformation).

More on that at PDB bias section.

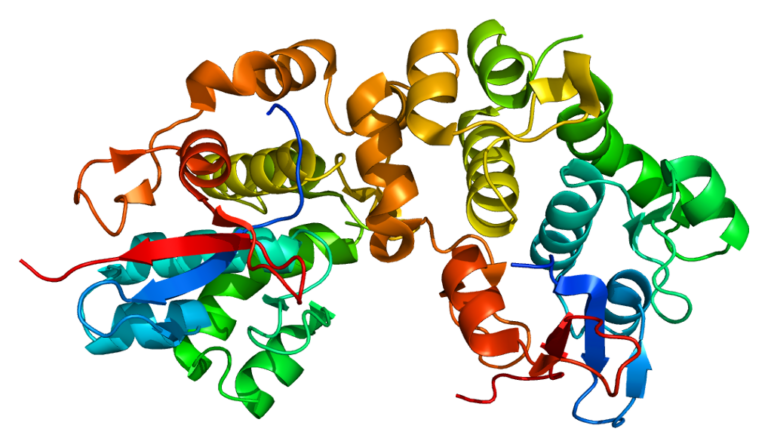

Source; an image of the DHRS7B protein (dehydrogenase/reductase 7B) created using Ribbon diagrams and rendered with PyMOL. This is not of concern now!

The AminoAcid sequence is the linear order of amino acids in a protein chain (referred to as the backbone or the “main chain”), meaning that we’re currently hitting the main component of the protein structure. Which is also the 1. Primary Structure of the protein

AminoAcids (AA’s) are made up of a carbon atom with a carboxyl (COOH, acidic) and an amine group (NH2, written H2N which is a base) each to the center (the carbon). The north bond being the Hydrogen on the north, and the last bond (south of the AA, called R group) would be either of the side chains. Please don’t mind the aforementioned pole directories

Source: Veritasium; attempting multiple different side chains, which are later binded to other AAs forming a linear chain (that is different from a side chain). In the final picture, that straight line represents a linear chain of the carbon (center of the amino acids!), each bound to a side chain [till they form a protein or become part of a complex?]. This serves significance when fidgeting with the data or a part of the larger intuition as a potential exploration area of interest.

This random choice between different side chains ends up forming the 20 AA’s that exist in nature, not taking into account the unknowns, and the rare AA’s. They’re encoded in Alphabetical names, such as “U” for the Selenocysteine AminoAcid (there are some not encoded with their respective starting word). The unknown one(s), or harder to distinguish between other AminoAcids are as following:

B(Difficult; may be aDorN),Z(Difficult; may beEorQ),J(Difficult; may beLorI) and finally:X(Undetermined or any unknown AA. Very interesting).

Along with the rare AA’s, which are the AA’s no. 21 and 22 respectively:

UandO

Some of these are not needed in human trials, for example the O, found in certain archaea and bacteria. Unless there are specific needs or research to be conducted on these organisms… This information is useful in knowing what AA’s we can model vs what we currently use to predict the final snapshot (we use the 20 known AminoAcids)

You can call the 22 AA’s “Proteinogenic” AminoAcids, which implies that they are used for building the protein from the sequencing and folding process. It’s important to note that there are over 500 naturally occurring AminoAcids that have been identified, in which case are called “Non-proteinogenic” AminoAcids. They are beyond the scope of proteins and the alphabetical order, meaning that they do not become “proteins”, but they serve other biological functions.

For the first question that came through, yes, there exists a potential of creating newer AminoAcids. However, they are being researched by modifying the R group (the “legally” modifiable part). Unless you modify the core parts (COOH and NH2), in which the AA may not be recognized by the cells machinery (no longer an “AminoAcid”).

Source: Sequence determines structure then structure determines function — and .. Oh, wait:

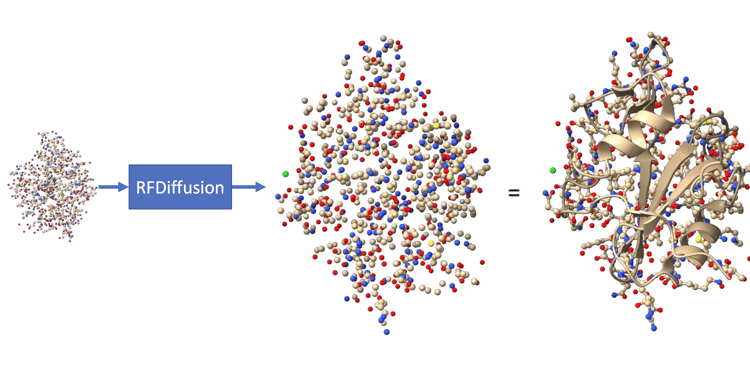

In this current decade, we can and are able to “create” proteins for specific functions that we dictate for a model to create a 3D protein (extremely cool I know!). They use the Proteinogenic 20 (sometimes the other two) for this, not by creating new AA’s. The process is very similar to creating images using AI, Briefly; having noise at the beginning, then iteratively removing the noise to generate a new protein structure that is a “plausibly folded structure”, maybe even incorporating a novel proteinogenic AminoAcid (engineered) in the diffusion process (or other parameters like symmetry specifications and length ranges).

Keep in mind that this is a generated backbone, and we still don’t know its sequence of AAs.

Source: An amazing article about the De novo protein design of RF diffusion. They also have an amazing table on the protein-design workflow!

Small note: It is very similar to predicting the next word in a sentence, where we iteratively remove the noise, and the model starts seeing patterns linking the text of description we gave (tokens) to its embedding space tokens. Its also similar to seeing patterns out of a seemingly redundant static noise. Nonetheless, It is deemed interesting but slightly out of scope.

Source: The amazing Rf diffusion!

There are more nuances to that, such as using ProteinMPNN to design a sequence of AAs that is most likely to adopt that specific 3D shape, then use AF2 or AF3 to verify the structure viability (sanity checking if it will fold back into the designed structure) before costly lab synthesis begins, that is, if we’re attempting to create the protein. At the AlphaFold Protein Structure Database ‘AFDB’, there are further elaboration on diffusion as well as AF3’s generative approach towards the 2nd prorein problem.

Psst! This model is a fine-tuned version of the RoseTTAFold structure prediction network (similar to AlphaFold)! Check out BakersLab!!

[More on that is a potential for integration in this article]

Building up from the smallest to the biggest protein component

The levels of protein structure are as follows:

1. Primary Structure

These are the most fundamental level of “structure”, which refers to the linear sequence of AminoAcid themselves. The covalent bonds (Called “peptide bonds” here, linking an AA with the other… sometimes disulfide) are keeping them intact (more on that below). Contrary (opposite) to the interactions other than that, which are to be considered “absent” (not affecting) within the primary structures stability and integrity under stress.

Source; The AminoAcid Chain

There are also conformational freedom, which are done by the partial double-bond, which are also called “trans-conformation” of the peptide bond between two AA’s… and its strength is somewhat between single and fully double bonds. What matters here is that the angle is nearly always fixed at 180 between only two AA’s, conveying a planar structure. So the bond has the proteins on a “strong approximation” set of an absolute physical constant angle called 𝜔 (omega).

We may need only the AminoAcid sequences as a “guide manual” in the folding process, which is due to the mass-feeding of the data to the target model (in which it earns the intuition and pattern-recognition from thousands of samples). This proved to work in the case of OpenAIs GPT-3 and many other LLMS when scaling complexity and data, till reaching a certain plateau that requires a redirection to innovating newer architectures or maybe re-framing the current use of what we have. This is highlighted as the “information bottleneck”.

2. Secondary Structure:

These are alpha helices and beta sheets, which are defined by specific, repeating patterns of . The secondary structure of a protein is related to a type of feature-in-interest (in the note below) more than the primary structure.

The Carbon (C𝛼) backbone are bonded with NH2 (single bonds, flexible) and have an angle, which are called Phi ϕ torsion angles, while the Carbon (C𝛼) and COOH bond angles are called Psi 𝜓 torsion angles.

SS is defined by specific, repeating patterns of the PHI/PSI angles along the backbone of the polypeptide chain. The values of these angles determine the overall conformation of the backbone in a specific region, thereby, it dictates whether that region forms a helix, a sheet, or a random coil.

Source: The main chain, called the Cα coordinates (left), as well as the side chains (right)

Source; The “Ramachandran plots” are plots defining the sterically allowed conformations of the PSI/PHI angles so the atoms wont go crashing on each other. Helpful in limiting the possible conformations, maybe better early on as an initialization technique or creating strict bins.

3. Tertiary structure

Since the tertiary structure is the overall, complex 3D shape of a single polypeptide chain (and the one AF1 attempted to “solve”), which includes all the secondary structure elements and how they fold; there is a conclusion that the proteins tertiary structure (the 3D fold) is determined by the interactions between the AA side chains (R group) and between other side chains with other! Their interactions (more on interactions below) cause the stabilization of the 3D shape. There is also an influence between side chains and the atoms of the peptide backbone.

4. Quaternary Structure

For the last structure, there is Quaternary protein structure (complexes), which has proteins consisting of the (1), (2), (3) structures, called now “subunits” (two or more proteins in the entire complex) that we mentioned.

Summary:

Source: Honestly, an amazing demonstration for the bigger picture view, for it to potentially have some terminologies in brackets like pleated sheets should be “Beta Pleated sheets” but that’s only to aid in learning structure terminology and avoiding unnecessary complications.

A very important part of structure are Domains in proteins, which are independently folding parts and are explained later for tandem with future Multiple Sequence Alignment.

The predictors of the tertiary structure

In our static protein structure prediction problem, we can safely say that the strongest predictors are their primary structure, the sequences. It is a deterministic way of finding out the final 3D structures, even though sometimes some proteins have different AA’s and still incur some attributes that of a different AA sequence (see 2 MSAs homologs). Their properties also matter:

The order of AA’s: Which is the primary structure predictor. This contains all the information needed for the protein to spontaneously fond into its correct, functional 3D shape.

The second property and it is the types of AAs.

The third property is the number of AA’s, which defines the overall length of the chain. This is relevant because it defines the size and complexity of the resulting structure. The longer the chain the more opportunities it has for folding.

These two properties together (types of AA and their order) are crucial as they dictate which interactions can occur. As stated on The Problem Statement, the folding code (1) are the explicit rules of folding in one of the two interactions; the predominant non-covalent interaction(s): hydrophobic effects, H-bonds, van der Waals forces and salt (ionic bonds) bridges. They solve the core question of “Why is this folded state energetically favored, and not the other states?”

Source: Van der Waals, as well as Solvent Accessible Surface Area (more on SASA/ASA at the B. Auxiliary/Intermediate Loss Functions)

They are weak individually but act together (thousands of them!) to stabilize the protein. Their drawbacks is that how easily they’re disrupted from heat or changes in ph. We also assume no extraordinary environmental conditions as mentioned in PDB bias.

The other type of interaction is the covalent interaction: disulfide bridges (or bonds). These bonds are stronger than the former mentioned non-covalent bonds. There is already a foreseeable potential of its aid in the Disease prediction section, maybe also a previous section that you can connect to.

These interactions as a whole put us on the importance of the 2) MSA. Which has one sequence far away from the other (in the same primary structure chain, speaking of a single protein) that are also touching [Important finding!]

The interactions were not explicitly fed to the algorithm as it implicitly learned them. Maybe that’s for the best, as sometimes exploration (Reinforcement learning) is more important than being given foundational knowledge. It is learned implicitly through the scaling of the network and data. If the algorithm doesn’t converge

One other predictor of protein structure are Multiple Sequence Alignments 2. MSAs homologs which are explained later and is the main signal used in AlphaFold 1.

Keep in mind that we have protein (or related) “lessons” also scattered around the document, as some information will be pulled when required.

Now, let us us demonstrate the popular dataset at hand:

PDB

The central Protein Data Bank has explicit entries of:

- The 3D atomic coordinates (fundamental target data for later) which allows the visualization of the final static snapshot of the folded protein. This is a very precise X, Y and Z location of every non-hydrogen atom (sometimes ( * ) hydrogen) in the resolved structure.

Source: Fun fact, this data structure in PDB has changed in ID’s for 5 years consecutively. I believe from 4 to 5 to 12, but it’s a great reminder that datasets do change.

- Bound Ligands/Molecules which are coordinates of water, ions (zinc, magnesium), drug molecules that are bound in the structure. They are non-proteins that were co-crystallized or co-solved with the proteins. May be useful in the deeper aspect of The Problem Statement

Source: Amazing “big picture” image illustrating ligands and molecules interactions

Multiple conformations (limited): NMR ensembles are models, meaning a small set of 10-20 representative coordinate sets

Experimental metadata:

- Experimentation methodology: X-ray crystallography (traditional, accounting for ~87% of all the PDB archive), NMR, and finally Cryo-em (Cryo is advancing rapidly!). These are the big three used in PDB. There are still other techniques used like SAXS, CD and other. Maybe also the experimental conditions that are crucial as indicated in the Formalisms and evaluation section

- The resolution or quality of the data, where higher resolution indicate a better “zooming in” the protein to identify the structure and its fine details. The higher the (Ang.), the lower resolution that we get. Similar to a pixelated photo, you can see the backbone, but not the individual parts like atoms and side chains and the opposite applies here.

- R-factor and R-free, B-factors, clash score, RSR and other. Check out the amazing documentation BY PDB assessing the quality linked here

- Source organism, and other data like sample preparation and refinement statistics.

( * ) Hydrogen being “sometimes” in the data is due to its weak signal and experimental limitations. They can be also too predictable, making it “wiser” to omit them (increasing computational efficiency) even though they are the crucial for protein folding and function.

The potential of application (of the quality and metadata) relies on their ability to be ingested to the Neural Network (NN) and to reduce their weight, as the less accurate the experimentally decided structure is the lower value we have on its potency to the predictions outputted. Surface side chains, disordered regions and flexible loops are other examples on weak or no experimental data due to their inherent motion or disorder in the experiment. They can be useful, however, if we were trying to innovate something here…

Most of the data used in AF1 should be derived.

Research: Changing to a more granular prediction

Remember the second formalism of proteins, the one about environments?

We have data from the infamous ProtienDataBank (PDB), in which the experiments in it (commonly) gotten from the X-ray crystallography has an environment which probably misses some important details:

- Non-physiological PH or salt conc. (These were used to force crystallization)

- Low temperatures (almost always, unless specialized experiments. Room temperature is increasingly becoming the norm here)

- Absence of binding partners or cofactors that normally are present

- Dehydration, etc

PDB bias

*This follows the aforementioned Formalisms and evaluation. It also uses “AF1” (our target understanding algorithm) to demonstrate the use of PDB. Targeting the missed second formalism as well as other notions of an “accurate protein structure prediction”

Protein folding, as a problem statement in itself is both a deterministic (somewhat NOT random) and stochastic process (somewhat random). Its determinism comes from the AA’s specific sequence, as most proteins with the exact same AA sequence have obviously the same shapes (scientifically, the “lowest-energy state”), while the environmental factors and random thermal motions (pH, ions, ligands, etc) add the stochastic elements in the folding process.

AF1 uses the deterministic side of the equation. In other words, it assumes a stable environment and specific laboratory conditions. At the worst case scenario, there there will be misfolding, and all the bad things could happen if a protein is introduced to an extreme environment.

At every other scenario, even a fever of 39c or so, there is little to no effect to the protein and stochasticity which implies a more deterministic outlook that we have currently have (sufficient average determinism). Only after that point, though, does it compromise proteins and its folding process, and the chances increase from thereon.

The uncertainty in AF1 predictions, where even a 1-2A difference is considered uncertain, is much larger than a couple Celsius shift in temperature. Though marginal activity may drop but still has no significant effect, maybe even more “flexible” and within biological tolerance (The effects that I’m currently aware of. DO NOT solidify that as a foundational barrier to your brain).

Therefore, unless we’re making protein fold in “possible” scenarios, this may not matter as much. This might affect our abilities in understanding and solving some diseases Disease prediction, it’s great to keep this in mind, though, as maybe there would exist a fever model or a very mythical application in which I do not endorse, maybe a certain environment-based protein folding process in the future that adds on to that layer, or something according to your liking.

And also to note that there are other databases (DBs) that were used by AF1, such as UniProt (big brain), Uniclust (targeted brain), BigFantasticDatabase (BFD) and MGnify. Some of which DBs are related to the protein itself and its sequence and such, and the other may be related to the 2. MSAs homologs.

AlphaFold Protein Structure Database ‘AFDB’:

Now we know about the Protein Data Bank (PDB) and many other available Databases to tackle the 2nd protein folding problem, it’s great to know that the predictions utilizing the PDB database (the one we’re building for example) have their own database of predictions. There are over 200 million “highly accurate” protein structure predictions from AlphaFold 2.

As the accuracy metric, we have the confidence scores of the fold predicted compared to the structure from the PDB called per-residue confidence score (pLDDT)

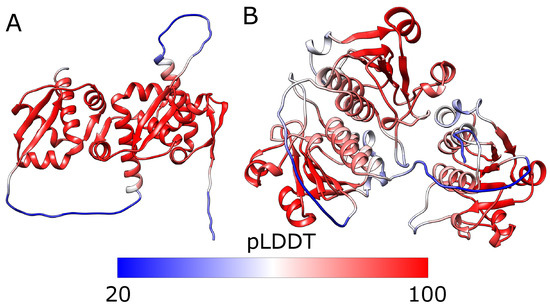

Source; Red shows high confidence areas while blue indicating a lower confidence score (which should be over ≥ 90 to be considered “Very high confidence”). They were used as primary losses later in AF2

The lower we go (blue), the more likely we reach a “flexible” protein zone of prediction, meaning that the algorithm cannot predict it well (or it doesn’t have the information that’s “satisfactory” level to model these, or maybe they’re just random regions or coils “IDRs”).

Small general theory lesson

It is also to be noted that the reliance on predictions to make predictions (or in other words relying on theories to make a theory) isn’t in essence a bad thing, as maybe theory (1) may be “right” (proved or assumed from the conclusions it produces), making all the other theories that is built upon theory (1) plausible.

Reinforcement learning

Source: Reinforcement Learning by Maxim Lapan (Cool book), demonstrating efficient parallelism training of algorithms

The insight is mainly raw intuition and fitting something seemingly impractical, as it was mainly formed because reinforcement learning (RL) has both stochasticity (at the beginning with possible environmental changes), and also a deterministic phase in later stages in some foundational algorithms (by decreasing the epsilon-greedy parameter to exploit knowledge gathered earlier from exploration. Similar to inference after training).

But that’s only intuition. In an idea of granular application, maybe there is potential; Like increasing stochasticity the more temperature we rise in the folding process, and subsequently decrease the determinism factor in it.

Although it is apparent that this is a similarity remark in processes of the two (proteins nature of folding and RL training processes), which doesn’t provide actual “insights”, unless..

One other way of thinking is that if we think about it as a non-originator algorithm (not being the core algorithm), RL may shine in refining coarse predictions from AF1 itself, that part that AF1 missed which is the stochastic parts, in which we have:

- State: Current structure from AlphaFold, as well as the MSA and probably every data ingested at the Next section,

- Action: Local perturbation (adjust a region),

- Reward: Energy decrease + structural validity. The reward signal maybe is the challenging part, as some are sparse and some are goal-based rewards (which require knowing the answer),

- Policy: Learn to make smart local adjustments that respect physics!

Maybe also if we even incorporate video output to the pipeline, for the algorithms to have a chance in modeling the complexities and incorporate a game just similar to Foldit (molecular dynamics intact and have a state), then, we can probably think about their practical extension and application, along with adding players back into the field… or any other field, really.

Implementation and Intuition:

Bigger Picture

There is an input, and there is an output — to and out of the neural network (Stage 1). Then, there is a Structural Construction stage, where we utilize physics-based “constraints” on all the inputs from stage 1 to get the final 3D coordinates (Stage 2).

The feature pipeline is one of the most important aspects for engineering, but for now, the components that are modeling the constraints for the second module are highlighted. They’re the loss functions for the Neural Network that also require intuition on biology to see their general potential usefulness as mentioned in the introduction of A Small Biology Lesson.

Then, optionally another stage, we attempt to reduce the energy to its lowest possible energy state (of course, based on what the model can handle with its current complexity!) using also a physics-based energy minimization. This third stage (optional) uses the 2nd stage as input and has an output of a refined 3D coordinates implying a yay!-level-lower-energy state of the protein predicted.

It’s somewhat plausible that there is a way to skip some parts working by using physics to learn the lowest-energy state (the folding process) and go pure pattern recognition from MSA’s and structural data. This is a possibility to note. (Nevermind, this is AlphaFold2-RAVE with others! and nothing is invented here)

Physics modules are still essential, though! One use case are for the drug binding that respect the physics aspect, e.x AutoDock, and others are for protein dynamics (data collection), and some other for validation (AMBER force-field-min) because machine learning in general can hallucinate structures (in AF2 case) or provide inaccurate constraints from the neural network (or general clashes).

It’s always useful to see the potential application of physics, even if it is “outdated”. Most likely, both components can be paired in a way. This applies to any field.

AF1 didn’t represent the minimum complexity of modeling proteins, it achieved a rather significant advancement than the simple template-based modeling (same protein == same structure) and Ab initio which uses physics energy functions to simulate folding (in which also rarely worked well, but hey, it may potentially have a comeback or at least similar to its intuition somewhere).

Maybe all it needed was essentials and fewer complexity.

Inputs

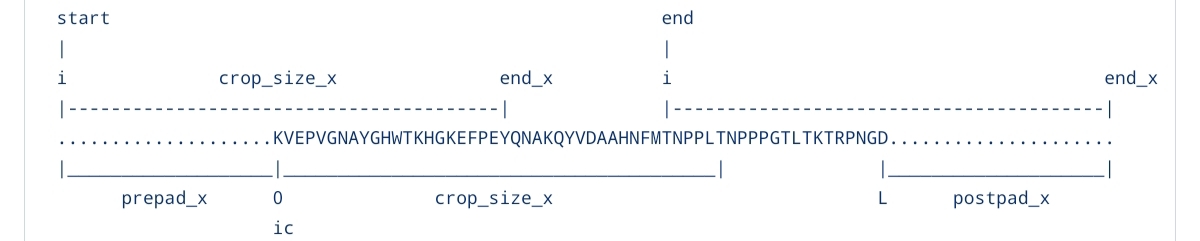

Source: The AlphaFold 1 paper. This image should make sense by the end of this section

The main data at hand;

1. AA sequence

Three letter words; ALA for Alanine, CYS for Cysteine, ASP for Aspartic Acid, etc) that we sometimes have to convert to their standard one-letter codes that we mentioned in A Small Biology Lesson. They’re often packaged in a FASTA file and AF1’s cannot ingest it without the help of the MSA, unlike AF2’s sequence-only mode.

“MKTAYIAKQRPGLV” an example. They can be subunits as in Quaternary structures (hemoglobin, which has 4 subunits of these sequences) or a simple sequence of a single string of protein AA’s. These are not used raw, and is used to search for MSA (next section).

2. MSAs homologs

Source; Veritasium; The Multiple Sequence Alignment. The term “homology” is an umberrla term for both “orthologs” and “paralogs”.

In simple terms, we see if two AminoAcids stay conserved across different species (orthologs) and other AA that may have co-evolved. Meaning that they mutate together, which often signals that they’re often close in physical contact when folded to 3D structure. This is crucial input to the NN. Minding the fact that this is not any p

Take a single protein for example, we’ll call it hemoglobin as a scientific name over “blood” (Bear with me for the simplification):

“MKTAYIAKQRPGLV”

Here, we say that “M” and “T” are pretty close in that 1D sequence (the string of characters you see here), it may be temping to say they are close to each other in 3D. but proteins can surprise you with how far they actually are. For this specific case, these are called structural significance. As in, M and T maybe are not close in 3D space, but they are important for the structure itself. Don’t mix the two ideas.

Now, let us introduce the meaning of “MSA” and how useful can it be by its amazing two aiding intuition: Co-evolution and General Conservation.

Imagine the same protein in 1,000 different species:

Human: MKTAYIAKQRPGLV...

Chimp: MKTAYIAKQRPGLV... (99% identical)

Mouse: MKTAYISKQRPGLV... (changed position 7: A→S)

Fish: MKTVYISKERPGLV... (changed positions 4,7,9)

Bacteria: MKTVFISQERPKLV... (many changes)

Pattern: some other organisms have similar AA sequences to a protein in question

Say that it was concluded that positions 7 and 150 are in contact in 3D (We x’rayed it); If position 7 changed from AminoAcid A to AminoAcid S, THEN consequently, position 150 (in which it is too far to be written sequentially here) also changes to maintain interaction (Which is called: Co-Evolution!) This is the point of MSA and homologs in general, which is to find this interaction and conserved regions by arranging related protein sequences). There are also types of correlations between residues:

Direct vs indirect correlation. If these two residues evolve together, then so often they are in contact or are physically close in the 3D space. The issue arises when two residues “seem” evolving together; after (1) one residue mutates, a third (3) (third party) residue also mutates, due to the second residue (2), in which we think that (1) and (3) are directly correlated and touching. Direct correlation is apparently a synonymous word with “contact”. I hope it has more depth into it

“DCA” (direct coupling analysis), a tool to be used later, solves this issue. Opposed to the traditional mutual information (MI) technique that will also be used regardless.

How “Identical” should we aim for?

As with this scope, it may seem vague as we would be optimally utilizing any form of similarity between homologs toward predicting structures. In the case with AF1, they utilized both techniques as to utilize even the weaker links between homologs (see Remote or distant homologs and Orphan proteins) either ways, they shouldn’t be a constraint if we’re looking for that along some smart engineering designs.

30-50%< match between the AA in question and its homolog(s), as a rule of thumb towards its viability, as to use in template-based matching till a 80% match. Else, they’ll be considered “Remote or distant homologs”, even worse for the case of the reliant AF1, one of the Orphan proteins.

Remote or distant homologs

There are certain proteins that look similar to certain others in their 3D structure, while oftentimes having also similar functions, all due to having descended from a common ancestor (being evolutionary related). But at the same time, they have a VERY LOW AminoAcid sequence-sequence similarity, maybe even undetectable because of the huge evolutionary time. The “metric” here is close to a <30% match and below till 20% between the given AA sequence and the homolog.

It’s important to note that, despite their vast differences in the AA sequence, there can be a similar structure AND function between the homolog and the AA sequence! Because that fold works, even if the AA seq are different. Nature says; “if it ain’t broke, don’t fix it”.

Here, we can be skeptical, though. As there will be different structural details (obviously, as the AA sequence is the primary driver of its structure as we previously mentioned 1. Primary Structure)

The use cases may be that if we had a new protein sequence without knowing their functions, we could search for a possible remote homolog to infer the functions.

Therefore, we can conclude that we have two input features;

the 1. AA sequence, and

the 2. MSAs homologs

Orphan proteins

These are proteins without homologs (No MSA depth, in other words, has no neighbors; similar to the Tetherin in vertebrates). Here, the sequence itself becomes the only input available for the NN, alongside the meaning that AF1 gets zero co-evolutionary information. We model the given AA sequences using “Free-modeling” (FM).

Orphan proteins may be attributed to the recent study of the Microproteome, where proteins of 100 or lower AminoAcids do exist and have a function (they thought it didn’t exist). Since they were ignored, there were not annotation intentions before that time, hence no abundant homologous sequences.

There are other algorithms utilizing language models like “RGN2” and “ESMFOLD” and “trRosettaX-Single” to predict structures. One may think of combining the two, or utilizing the other when there are no homologs available, also the idea of generating Synthetic MSAs (beneficial for Alphafold and its variants) like “(GhostFold)” and such. So anything out of MSAs is therefore definitely out of AF1 (unless you’re seeing something that haven’t seen), since it relies heavily on MSA’s and orphan proteins aren’t ideal. Planning on adding in this article many architectural combinations and / or tools in mitigating the dozen concerns, including the Formalisms and evaluation.

Domains in proteins

A protein like TITIN is 34,000 amino acids long. It has about 300 of the title; domains. Each domain folds independently, has its own evolutionary history, its own function, its own set of homologs. Treating it as one thing “i.e searching MSA’s using whole proteins” isn’t very wise. For that MSA search is meaningless, because you get noise searching for homologs when including the entire protein and not a domain.

TITIN:

[domain1] [domain2] [domain3]...[domain300]

Example domain respectively:

kinase ig-fold PEVK repeat

domain domain domain

Each domain has completely different evolutionary history, MSA, co-evolution signal and structural constraints than other domains. There are also two types of domain search (MSA): the domain search of orthologs (different organisms, diverged due to oragnsim speciation) are the dominant methodology for MSA search, while the search of paralogs (same organism domain, where the sequence is diverged due to gene duplication) is a weaker signal than the orthologs due to it being mostly surface-level mutations (functional) that don’t convey much about the structure of the domain.

Loss functions

Loss functions are the predicted value or representation and comparing it to the actual representation that a neural network or a machine learning algorithm should have predicted (Ground-truth labels). In other words, the difference (minus sign here) between the predicted value and its actual value. The folding code (rules and interactions) are learned here. The loss function guides the massive number of weights to learn the correct patterns, meaning that the NN optimizes for structural accuracy, implicitly learning the folding code that is mentioned at the The Problem Statement.

Adding onto that, at the Second stage, there will be no “optimizing weights”, just something that’s similar to it in the wording, called “Energy minimization”. So most of the following loss functions are used strictly in the NN process.

The loss function outlook is just as large as AF1 multi-task learning technique. One neural network is trained on multiple outputs, as well as the hope that the “shared structure” improves performance. Because, having a shared structure of

The loss functions are split between two groups:

A. Primary Loss Function: Distogram

B. Auxiliary/Intermediate Loss Functions: Torsion Angle, The Secondary Structure, SASA

A. Primary Loss Function

The True Distogram

Also called “Inter-residue distances”; They are the distances between two residues (A pair of AAs). Here, we compare the distance between each AA and another in the same protein at a 3D space. They’re often categorized by bins as will be mentioned in Engineering. The Distograms aren’t provided plainly from PDB, but they are DERIVED from the “Atomic Coordinates” (more on Atomic Coordinate are at the Final Output; from the previous stage, and to find more about derivations and some speculation, head to Stage one NN’s four heads outputs four variables).

Source; What the usual distogram looks like

Example (distogram) illustration between five Amino Acids in the same protein:

M S V T Q

M 0 3.8 7.2 12.1 15.3

S 3.8 0 4.1 8.9 14.2

V 7.2 4.1 0 5.3 10.1

T 12.1 8.9 5.3 0 4.8

Q 15.3 14.2 10.1 4.8 0

So they’re distances between pairs of AminoAcids. You can see that the distance between AA “M” and itself is zero on the top left corner (similar to the distance between you and yourself), while other pairs facing the same pattern. There is also a pattern hinted by a diagonal line (as you’ve also seen in the image above)

You can optionally switch them to Binary Contact Maps when hit a range:

1 = if distance is below 8Ang

0 = Above 8Ang

Example Illustration of Binary Contact Maps:

M S V T Q

M 0 1 0 0 0

S 1 0 1 0 0

V 0 1 0 1 0

T 0 0 1 0 1

Q 0 0 0 1 0

Motive of the distogram:

The idea on choosing the distogram is due to its similar properties with the MSA:

Compared to our other loss functions, this is only global information, meaning that it involves residue (AA) pair interactions (Contacts, folding topology, domain packing) far apart in sequence: Res20 with Res150 as explained in the previous MSA section. As for the local information, handled by mainly our PHI/PSI torison angles as well as the secondary structure.

In AlphaFold 1, researchers turned protein data into that distogram (a 2D grid showing the distance between every pair of amino acids). They treated this distance map exactly like an image because they assumed that if amino acid A is near B, and B is near C, there’s a local “shape” to be found. Though, this was a constraint, which was alleviated using AF2 (the Transformer architecture). A great thought here is how simple this is, and how forgetful one can be of such properties when indulged into their technicalities. Research is great if the part of simplicity and core information is used to align with many other and then later form intuition.

“What can the NN conclude though?!”

- Some AminoAcid pairs are far apart in 1D, but are close in space. Then, this is possibly a folding interaction (which are exactly what the 2 MSAs homologs encode)

- For other auxiliary losses, Close residues (

3-4 Å)? Then a match in helix shapes, Variable distances then suspects a loop form (both are secondary structure) - Other conclusions and (on how) it occurs are by the stacking of many layers at the Neural Network. Many other features that are statistics-based; like the residues own information (speaking of one-dimensional data), and then the residues pair relationship (speaking of two-dimensional data). This is combined with the other biological cues, which are the loss functions. Statistics is emphasized more at the engineering section.

The NN can conclude the characteristics of a given sequence and distance map and use the pattern it found to predict the distance maps and others, and it keeps iterating on that till it has finer predictions (with emphasis on bias and variance).

B. Auxiliary/Intermediate Loss Functions

They are the loss functions that aid learning early on (Scaffolding), as the global information is known from accumulative local information.

1. True Torsion Angles, PHI/PSI

It uses the backbone and side-chain dihedral angles. Basically the rotational degrees of freedom (the intuition) that define the SS (indirectly in practice) of Alpha and beta sheets! They were learned, and they provided knowledge on how “much” the backbone could physically bend and twist on the Ram-plots previously defined. Torsion Angles are compromised of Phi and Psi. (See 2. Secondary Structure)

2. True SecondaryStructure (SS) profile

Source: The Secondary Structure of a Protein (see the previous 2. Secondary Structure)

Although they have their own head as output (just as the other loss functions), they’re often derived from the PHI and PSI ranges( * ). These help learn the local geometry as stated before. It outputs a probability of each position being either:

- H (helix: spiral-looking)

- E (strand-looking)

- C (coil: strand but in an unstructured form)

3. True Solvent Accessibility SASA/ASA

The actual amount of surface area of each residue exposed to the surrounding solvent. Binding sites are likely to have High SASA, but not vice versa. Meaning that an exposed part of a protein may likely be a binding site for whatever the purpose of the protein in question is to do [Cut off]. This helps the NN learn about hydrophobic core formation, along with loop regions. Scientists check if hydrophobic residues are properly “buried” away from the solvent as expected in a stable fold. The SASA is found by the rolling-sphere algorithm

By adding “True” in these loss functions, we’re emphasizing the current stage in question (i.e: Training, these are given ground truth derived data, as they won’t be available in inference). Adding onto that, the two loss functions SASA and SS will not be used in the later stages, but it is useful to attempt and find some form of use for them downstream.

These auxiliary losses aid the NN to learn, but remember, we don’t specifically use them in other than the neural network to aid learning. An exception is the PSI and PHI angles that help in later stages.* For the auxiliary losses, use these as additional training signal during training with a very marginal weight provided (not affecting the outputs as much as other loss functions like Distance Matrices and the Angle Matrices, as they’re with a priority here).

Outputs:

Note: AlphaFold 1 produced several outputs that described the proteins conformation in a 2D, pairwise representation which are essentially structural features and local geometry information.

Stage one: NN’s four heads outputs four variables

…they’re also probability distributions, not single values, using the name of all the four aforementioned loss functions; Torsion angles, SS, SASA, and the Distogram.

The loss functions are NOT the same as “output heads”, keep that in mind.

Here are “Shared latent representation” of a single NN type (A Conv-net, which are used not only for images as some practitioners assume. More on that later), and the probability distributions are the outputs of the four heads from that shared latent space of the neural network (Emphasizing efficiency).

AF1 is not predicting structure.

It is predicting constraints, using evolution as a sensor. The final stage is geometry constructed using the provided constraints.

The network basically is heading toward the distogram, as AF1 authors, put more weight on it than any other head.* All the other heads are “regularizes” that shape the loss landscape and make learning easier early on for that distogram head (priority). In practice, you can add more heads as desired. E.x; contacts, hydrogen bonds, disorder, interface probabilities. But, if these novel heads won’t add a new learning signal (other than the four we currently have), then they won’t improve our target: The distogram. Worse yet, they will make training performance degrade (information bottleneck theory).

The paper authors tested ablations (what if we removed component X? Any changes in accuracy? Any changes in component Y’s behavior?), and by removing the distogram the model had collapsed (a head of significance), whilst other components shown lesser performance drops.

( * ) Note: The SS are inferred/derived from the PHI and PSI angles themselves using other algorithms like DSSP logic to assign H/E/C by analyzing angles of the distance maps all in all after the NN prediction. If we wanted to know and predict the SS before the model outputs a 3D structure, we use the torsion angles and ingest it to a “PSIPRED” or a “S4PRED” to get a prediction. Whichever fits the speed/accuracy goal respectively.

A Question I had, now answered:

Information content separation from training signal:

In theory: SS can be “inferred/derived” from φ/ψ (PHI/PSI torsion angles that were outputted), so it’s a ground truth label from the predictions themselves, which fits the provided PHI/PSI that the model predicted. So why do we even predict the SS in a different head? Isn’t that computational overhead?

The issue with “inferring”, for multiple reasons;

- Is that “inferring” itself doesn’t have gradients (i.e: the neural network doesn’t “learn” from inferring). The Auxiliary heads we have are not about correctness, but they are about conditioning the geometry of the representation space (Machine Learning).

- So “infer-able” means to be able to compute something after the fact (output)

- As for “supervisable”, it is able to provide gradients during training, which shapes learning

Using ML terms, in the case of the “inferring” route, the NN algorithm can reach the same solution, but SGD has no reason to walk there efficiently

(Stochastic Gradient Descent, it is an optimizer of any loss function. More on that are later at the engineering section).

- Again, the head(s) output a probability distribution of the PHI/PSI angles. Would it be wise and derive all of the probable values that we get? Well that means we can add as much outputs as we need, but there has to be a loss that guides it in a way that it knows that it does its thing (prediction) correctly; hence the “Supervised learning” term comes through.

“What if we switch to a single value output only for the PHI/PSI head?”

Well, to infer the SS, we still need to compute the X Y Z coordinates and such which is the output found and gotten from the second stage (next stage).

- The real computational overhead is comparable and actually, is more from the act of inferring itself, not from the “extra” outputs.

in (1), It’s almost similar to Reinforcement Learning when it is trained to play chess and we hand out the game rules to make training faster and less noisy at the start of training, avoiding the “reinvention of the wheel”. However, it also puts a limit in it’s exploration early on (which sometimes put non-creative assumptions risk that is early on training, hereby making the NN learn quickly in exchange to having “foundational assumptions”).

See the video here

Insight: Sub-sampling and templates, varying random seeds to the MSA to output multiple proteins and conformations (black-box as salt alternative). If you needed uncertainty quantification or constraints beyond just distances (angle and excluded volumes) or maybe you have a noisy or incomplete distance map(s). Modifying the MSA itself seems core. There are potentials of having an NN here, too. Although in AF2, the geometry formation (the next stage where we construct the 3D coordinates) occurs inside a neural network, not away from it as with the case of AF1. Although it seems like in AF2 the [Cut off]

Stage two

Then we take both outputs (Distograms and Angle Matrices), by constructing the full 3D atomic coordinates using either options:

- Pure Maths (MDS or similar tools) without learning.

- A blend of physics and mathematics: which is different from a traditional energy function, as it directly “learns” from data and principles from physics (Van der Waals forces, and all the other interactions covalent and non-covalent interactions taken into account as constraints), instead of using a less-sophisticated method like MDS. This ensured that the resulting structure was physically realistic, too, also is the approach used by AF1!

The PHI/PSI angles are used as an initialization to the backbone geometry in this stage, while the distograms are converted to energy potential

[More to be integrated of value initialization, as well as differing them between LeCun/GLorot and others]

Final Output; from the previous stage

Source: A 3D space of a protein,

“The Atomic Coordinates”: They’re the “precise” orthogonal coordinates in 3D positions (X - Y - Z) , all measured in Ångströms (which are really small!) for every atom in a proteins entirety of a tertiary (or quaternary) structure, including the backbone and side-chain atoms. I found it appeasing to introduce them here, as they are the outputs after-all and is the “main” PDB data we’re given to think with. We use the PDB ground truth labels indirectly by deriving the distogram from it.

“Why did we not use the coordinates as substitute to the Distograms?!”

To understand why, we must clarify an important distinction between three similar ideas:

- Atomic coordinates (our data). These are the final result, the solution in Stage two. They are not used to train the model in any way other than deriving the distogram

- The primary structure, which is just a string of AminoAcids mentioned in the 1. Primary Structure

- The 1. True Distogram, This is invariable to the rotations and flips we may make to the protein in a 3D space compared to the infinite and variable precise 3D coordinates, making the former (Distograms) significantly more stable than the latter (precise 3D coordinates).

So if we inverse, rotate, translate the same structure, the coordinates would change even though it is the exact same structure. Meaning that this representation of proteins has infinite coordinate representations precise-coordinate wise, contrast to the beautiful and constant distogram.

This practically means we had to do the act of deriving or else the network wouldn’t have converged at all (Meaning that it cannot see a pattern in the infinite representation of Atomic coordinates). Nothing is “useless”

Source: Looking back at the previous image, the entire process should make more sense now (as well as the helpful captions above)

Summary of the losses:

Extract PDB coordinates, to

Derive distograms, torisons, SS, SASA, to get the

NN losses

Stage three (Optional)

After the converging of the gradient descent on stage 2, using a classical physics simulation to slightly adjust the structure. Removing minor clashes and optimizing local geometry along with other many optimizations.

The difference between this stage and the second stage is that this is more fine-grained polishing (very fine touches) while the former second stage is more coarse and a global optimization of the fold. At this third stage, we use the best structure outputted from the previous stage and apply cycles of energy minimization and repacking techniques.

Disease prediction

Imagine for a second: Two proteins touching each other:

MKTAYIAKQ(K)PGLV ... YKV(E)SFIKQ

K: AA no. 10 which is positively chargedE: AA no. 50 which is negatively charged

So if we had AA on position 10 of a protein K, we can safely get another AA with the same charge in it’s place, like ‘R’ , which are both positive (which is also called a conservative mutation).

Or else, if that occurs where repulsion meets where there should be attraction (If it were an AA of A, which is neutral); The protein misfolds, aggregates and maybe loses its function (No, there is no zombie behaviour for Halloween in such cases!)…

The stressors of proteins are heat, pH, and other chemical treatments that make the protein “denure” (to break down), with the exception of one structure:

Having the primary structure intact during stress provides renaturation potential! Implying that for the other two structures (SS and TS), which are greatly affected by the stressors of proteins, can REFOLD back (also by adding a gentle reintroduction to its normal conditions) to its correct functional tertiary structure all due to the proteins primary structure. The only thing(s) that can break the proteins primary structures are harsh chemical treatments or specific enzymatic action (proteolysis) to break the covalent bonds.

There are three disease mechanisms (roughly):

- Loss-of-function

- Gain-of-Function

- Dominant-Negative

There are possibilities when proteins do not fold as intended, either due to size mismatches or wrong charges; Maybe due to the energy landscape changing as a result of being in a different environment or maybe due to a protein that cannot escape a kinetic trap, as in being in wrong conditions and (e.x; no chaperones, the ones helping a protein fold) or maybe proteasomes which are quality control (cell level).

The percentage of diseases coming from Gain-of-Function (GOF), when the protein works too well or does something new and bad) are approximately (24%) of all diseases, while Loss-of-function (LOF), when the protein breaks, are accountable for up to 52% of diseases.

(AR) mutations occur at the protein interiors (58%), while only (15%) are in the protein’s surface. These are also at the LOF mutations.

Some of which can trigger certain diseases where mutant proteins sometimes gain zombie-like behaviour called Dominant-Negative (DN), and unfortunately, most of these mutations are that of serious diseases; some are: cancers and seizures (GOF). Others are: the Prion disease, some Huntington’s diseases // Hemophilia // Cystic fibrosis (LOF) and other such protein-based issues like (DN) whereas it is a contagious mutation causing HMT, Myocilin and some collagen diseases.

So that we got this out of the way, maybe we start thinking using these as an anchor point. Say, a “ΔΔG” to measure a folded protein’s stability (i.e: How easily it unfolds and cause trouble) basically a measure of destabilization and such after the protein has folded. Any unstable protein has the potential to unfold, and getts degrades, may also be potential for a LOF mutation.

Now this ΔΔG is also called the change in free energy between two states:

ΔΔG = ΔG(mutant) NOT normal - ΔG(wild-type) NORMAL

Meaning that ΔΔG (folding) is for stability! But a crucial point to make here is that ΔΔG can be also a binding (interaction). The former (stability) is done after the protein has folded and measures how stable it is, Example:

Interpretation:

- ΔΔG > +1.0 kcal/mol: Mutation destabilizes → protein misfolds or unfolds more easily

- ΔΔG < -1.0 kcal/mol: Mutation stabilizes → protein is harder to unfold (often GOF signal)

The latter ΔΔG (binding) is done also after the protein folding process, but when interacting with something else. So it answers: “Does this mutation weaken or strengthen the protein-protein interaction?”. Example:

*Interpretation:

- ΔΔG > +1.0 kcal/mol: Mutation weakens interaction → potential GOF or LOF depending on context

- ΔΔG < -1.0 kcal/mol: Mutation strengthens interaction → GOF signal (binds too well to wrong partner)

Important misconception: When calculating both ΔΔG’s, we’re always working with the folded structure (unless your creativity says otherwise). But we’re predicting how stable is the final folded state. (Remember? Proteins don’t fold once and that’s it!)

Now, how much would it take for a mutant to stay in the native state? Or in other words, how much MORE destabilized is the mutant? So the higher the ΔΔG, the more easy it unfolds, the lower it is, the more it resists unfolding (which open up possibilities of GOF).

ΔΔG (folding) doesn’t predict “folding”, rather, it predicts “unfolding.”

Architecture wise, there are some already on the shelf computing ΔΔG

- FoldX and

- Rosetta,

- and maybe a latest RaSP

An output may look similar to this (A layered production pipeline, just for demonstration!):

STRUCTURAL ANALYSIS:

- ΔΔG Folding Stability: +2.3 kcal/mol (DESTABILIZING)

- Position Location: Buried interior (80% burial)

- Distance to interface: 12.4 Å (isolated)

RECOMMENDATION:

- Likely pathogenic (LOF mechanism)

- Experimental validation: Thermal stability assay

- Cellular: Check protein expression levels

Being aware of the limitations of the current architectures and available tools is crucial, as we need several factors that are deeper than intuition-level:

- aggregation propensity (Forming plaques similar to certain diseases),

- cellular trafficking (Stuck in ER. Used as a DN signal),

- dynamic properties (Flexibility changing and such),

- binding kinetics (How fast does it unbind)

- cofactor dependence (Metal coordination and its loss)

So they don’t explain which forces are broken and such other things.

We can take a scenario where it fails to forecast a mutation type:

Scenario: Mutation has ΔΔG = +1.5 kcal/mol (destabilizing)

FoldX says: "This is destabilizing, probably LOF"

But actually:

- IF it breaks a salt bridge in interior then LOF!

- IF it breaks hydrophobic core indicates aggregation (Alzheimer's-like)

and more...

Same ΔΔG, different statistics

Maybe we need a layering advice: Energy calculations, interface analysis, mechanism-specific logic all on top of the structure that we get from PDB or our model… Thereby increasing complexity. But, that’s a potential to optimize, and hopefully to also find a better approach. This part feels a bit underdeveloped, though. My condolences. Feels as if I haven’t poured enough thought for it even though it is exciting

We delved much on insights and other introductory parts. This requires basic familiarity with some Python code. Nontheless, nothing will pass unquestioned that I myself have questioned as well. We follow the implementation of the repository linked here,

We’ll zoom in, zoom out, keep seeing both pictures and identify the optimal practices for both Python and Machine learning.

Engineering

Using the documentation of the partially-open-sourced repository from DeepMind, as well as using the paper publishing (if available) would be almost enough in replicating the algorithm and the features pipeline.

Remember, this is an inference-only codebase, hence no loss functions were taken into account. This amplifies theoretical viewpoint in machine learning of components (where we use auxiliary losses) that aid learning, but aren’t the component(s) that are implemented, which feels like half the cake. But makes me glad that I elaborated on these protein components, and hopefully they become of use towards your project or goals.

PATH 1(A), you compute your own features (emphasized more here):

protein.fasta + .hhm + .aln + .mat, passed to

feature.py, gets us the

protein.npy (this is your dataset file), thrown to

dataset.py

PATH 2(B), you use DeepMind’s released data:

DeepMind's protein.tfrec which are pre-computed features, downloaded

tfrec_read + tfrec2pkl (extract)

protein.pkl (this is your dataset file), thrown to

(The rest of) dataset.py

Conceptually, the first step in the implementation is deciding what the NN will predict: and that is the Pairwise distance distributions between residues (AA’s), The True Distogram. That decision forces everything else.

Practically, the first code you would write is: A data pipeline that can turn (sequence + MSA) into supervised labels using PDB and statistics. But

Source; Veritasium; The Multiple Sequence Alignment as previously shown

The steps of PATH A is as follows:

- A “FASTA” protein sequence. You can:

- Write it yourself if you know the sequence

- Download it from UniProt for any known protein

- Get it from a sequencing experiment in your lab

FASTA file format

>ProteinName

MKVLWQALG

Using external databases, we generate the MSA’s using only three external tools;

- HHblits to search BigFantasticDatabase using the previous FASTA Sequence of choice. There are tools that negate the Orphan type of homolog when searching for MSA’s, but certain others (like this HHblits! and such that we’ll be applying) are more sensitive and specifically designed to detect such homologs. This produces

.hhm+.alnfiles.alnfiles are used in many other features mentioned infeature.pyThe HHblits also produces the.hmm(HMM_profiles). - PSI-BLAST to search UniRef90 database. We use PSI-BLAST from an already extracted MSA to build the PSSM later on (these aren’t implemented yet in this repo as data, they’re zeroed out)

- plmDCA is then run on the

.alnfile, producing the.matfile of the pseudo-likelihood features (called Potts couplings, more on)

All of these as well as other features are then ingested into the feature.py module. It reads the three produced files.

Remember, the lower quality MSA we have, the less of a chance we have of modeling effective constraints. How “Identical” should we aim for?

MSA lookup in detail

Let’s say your protein is length L = 5

Target sequence: M K V L W (FASTA)

After database search using sequences, you get:

MSA (N sequences × L positions)

Seq0 (target): M K V L W # in other words our sequence

Seq1 M K I L W

Seq2 M R V L F

Seq3 M K V M W

Seq4 - K V L W

Now, we convert these letters as integers, because we need them to count frequencies and build histograms, as well as use them in index lookup tables and compute the statistics to be met in the future. This is partly for the sake of efficiency.

Encoding amino acids as integers (0–20, 20 = gap):

M=12 K=8 V=17 L=10 W=19 I=9 R=14 F=5 gap=20

So, an MSA tensor would look like:

[[12, 8, 17, 10, 19],

[12, 8, 9, 10, 19],

[12, 14, 17, 10, 5],

[12, 8, 17, 12, 19],

[20, 8, 17, 10, 19]]

MSA shape: (N=5, L=5)

This is still not fed directly to the NN, as the NN cannot consume raw MSA symbols meaningfully, but we also require other viable data as that we can extract from MSA’s .aln as well as others to be mentioned: We have one step(s) (haha) to do before that:

Per-position/residue statistics 1D features

Source: Veritasium; In the first step (hhblits_profile); for each column i in the MSA (top to bottom), compute frequency of each AA. We do this for all positions:

Example at position 6:

G (this AA is from our sequence), G, G, D, G, G, G, G (homologs)

Frequencies:

G: 6/7 D: 1/7

This gives a:

22-dimensional vector